- University of Tübingen

Maria-von-Linden Strasse 6

72076 Tübingen - Room 20-7/A18

- vguzov@mpi-inf.mpg.de, vladimir.guzov@uni-tuebingen.de

- @vguzov, @guzov_vladimir

About Me

I am a PhD student in the Real Virtual Humans group within the Department of Computer Science at the University of Tübingen and the Department of Computer Vision and Machine Learning at the Max Planck Institute for Informatics, under the supervision of Prof. Dr. Gerard Pons-Moll. I did my Bachelor's and Master's research on human reconstruction from depth images at the Moscow State University Graphics and Media Lab. My current work explores human-object interaction and human motion capture.

Research Interests

- Human body reconstruction

- Human performance capturing

- Camera and object localization

Recent Awards

- Our Human POSEitioning System publication was shortlisted for the Best Paper Award at CVPR 2021

Publications

Xiaohan Zhang, Bharat Lal Bhatnagar, Sebastian Starke, Ilya A. Petrov, Vladimir Guzov, Helisa Dhamo, Eduardo Pérez Pellitero, Gerard Pons-Moll

FORCE: Dataset and Method for Intuitive Physics Guided Human-object Interaction

in International Conference on 3D Vision (3DV), 2025.

Vladimir Guzov, Yifeng Jiang, Fangzhou Hong, Gerard Pons-Moll, Richard Newcombe, C. Karen Liu, Yuting Ye, Lingni Ma

HMD^2: Environment-aware Motion Generation from Single Egocentric Head-Mounted Device

in International Conference on 3D Vision (3DV), 2025.

Lingni Ma, Yuting Ye, Fangzhou Hong, Vladimir Guzov, Yifeng Jiang, Rowan Postyeni, Luis Pesqueira, Alexander Gamino, Vijay Baiyya, Hyo Jin Kim, Kevin Bailey, David S. Fosas, C. Karen Liu, Ziwei Liu, Jakob Engel, Renzo De Nardi, Richard Newcombe

Nymeria: A Massive Collection of Multimodal Egocentric Daily Motion in the Wild

in European Conference on Computer Vision (ECCV), 2024.

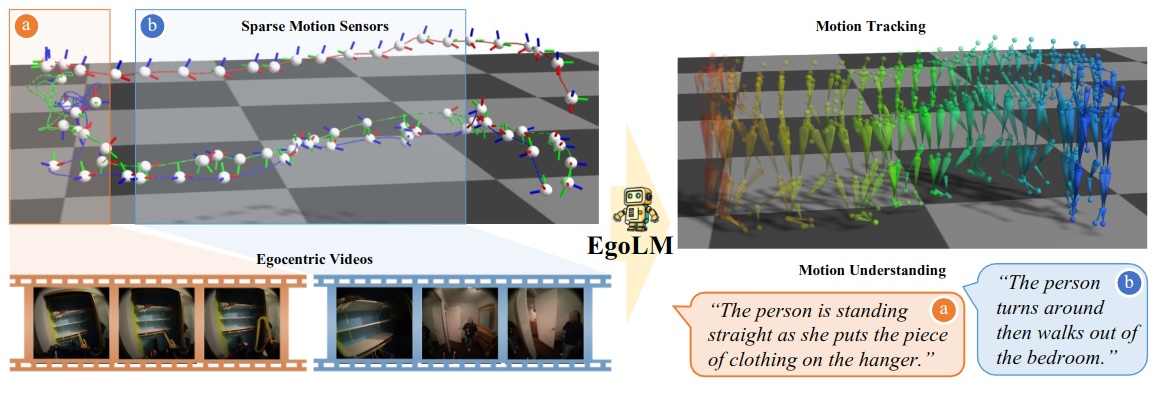

Fangzhou Hong, Vladimir Guzov, Hyo Jin Kim, Yuting Ye, Richard Newcombe, Ziwei Liu, Lingni Ma

EgoLM: Multi-Modal Language Model of Egocentric Motions

in arXiv, 2024.

Vladimir Guzov, Ilya A. Petrov, Gerard Pons-Moll

Blendify - Python rendering framework for Blender

in arXiv, 2024.

Xiaohan Zhang, Sebastian Starke, Vladimir Guzov, Helisa Dhamo, Eduardo Pérez Pellitero, Gerard Pons-Moll

SCENIC: Scene-aware Semantic Navigation with Instruction-guided Control

in arXiv, 2024.

Vladimir Guzov, Julian Chibane, Riccardo Marin, Yannan He, Yunus Saracoglu, Torsten Sattler, Gerard Pons-Moll

Interaction Replica: Tracking human–object interaction and scene changes from human motion

in International Conference on 3D Vision (3DV), 2024.

Verica Lazova, Vladimir Guzov, Kyle Olszewski, Sergey Tulyakov, Gerard Pons-Moll

Control-NeRF: Editable Feature Volumes for Scene Rendering and Manipulation

in Winter Conference on Applications of Computer Vision (WACV), 2023.

Xiaohan Zhang, Bharat Lal Bhatnagar, Sebastian Starke, Vladimir Guzov, Gerard Pons-Moll

COUCH: Towards Controllable Human-Chair Interactions

in European Conference on Computer Vision (ECCV), 2022.

Vladimir Guzov, Aymen Mir, Torsten Sattler, Gerard Pons-Moll

Human POSEitioning System (HPS): 3D Human Pose Estimation and Self-localization in Large Scenes from Body-Mounted Sensors

in IEEE Conference on Computer Vision and Pattern Recognition (CVPR), 2021.

(First two authors contributed equally)

Oral, Best paper finalist
