Our research is at the intersection of computer vision, computer graphics and machine learning--we develop computational algorithms to efficiently digitize people and train machines to perceive people from visual data.

Current computer vision algorithms can detect people in images or estimate 2D keypoints with remarkable accuracy. However, people are far more complex: we effortlessly sense other people's emotional state based on facial expressions and body movements, or we make guesses about people's preferences based on what clothing they wear. Our goal is to build virtual humans that look, move and eventually think like real ones.

For all enquiries please contact:

Violaine Le Guily

Administrative Assistant

+49 (0)7071/29-70570

violaine.le-guily@graphics.uni-tuebingen.de

News

6 papers accepted at CVPR'26

February 2026

1) CARI4D for 3D human-object interaction reconstruction, 2) ELITE: Efficient Gaussian Head Avatar, 3) PhysHead: Simulation-Ready Head Avatars, 4) GeoRelight: Geometrical Relighting and Reconstruction, 5) FrankenMotion: Part-level Human Motion, 6) MoLingo: Motion-Language Alignment. Congratulations students and collaborators, more details coming soon!

SMPL wins SIGGRAPH Asia'25 Test of Time Award

October 2025

The SIGGRAPH Asia 2025 Test of Time award has been awarded to SMPL. It is a prestigious recognition given to papers with significant and lasting impact on the field of computer graphics. Congratulations to Gerard Pons-Moll and his collaborators!

SMPL receives Everingham Prize at ICCV'25

October 2025

10 years after its publication, SMPL has been awarded the PAMI Mark Everingham Prize at ICCV'25. The prize is given annually to researchers who have made contributions of significant benefit to the computer vision community. Congratulations to Gerard Pons-Moll and his co-authors Michael J. Black, Matthew Loper, Naureen Mahmood, and Javier Romero!

2 papers accepted at ACM SIGGRAPH Asia'25

October 2025

1) InfiniHuman can create infinite 3D humans with precise control. 2) PhySIC reconstructs physically plausible 3D human-scene interactions from a single image. Congratulations students and collaborators!

Gen-3Diffusion accepted at IEEE TPAMI.

June 2025

Gen-3Diffusion: Realistic Image-to-3D Generation via 2D & 3D Diffusion Synergy is accepted at TPAMI 2025. Congratulations Yuxuan and collaborators!

Workshop on 3D Vision and Graphics

March 2025

We are organizing the first ELLIS workshop on 3D Vision and Graphics, March 20-21 in Tübingen, Maria-von-Linden-Straße 6.

Feat2GS accepted at CVPR'25.

March 2025

Feat2GS: Probing Visual Foundation Models with Gaussian Splatting is accepted at CVPR'25. Congratulations students and collaborators!

4 papers accepted at 3DV'25.

November 2024

Topics are: 1) Physics Guided Human-object Interaction, 2) Environment-aware Motion Generation from Egocentric View, 3) Tracking Human Object Interaction without Object Templates, and 4) Unifying 3D Human Motion Synthesis and Understanding. Congratulations students and collaborators!

Neural ICP for 3D Human Registration at ECCV2024.

July 2024

Riccardo Marin will present NICP: Neural ICP for 3D Human Registration at Scale at ECCV, Milan, 2024.

4 papers accepted at CVPR'24.

February 2024

Topics are: 1) Template Free Reconstruction of Human-object Interaction with Procedural Interaction Generation, 2) Neural Riemannian Distance Fields for Learning Articulated Pose Priors, 3) Text-to-Texture Synthesis via Deep Convolutional Texture Map Optimization and Physically-Based Rendering and 4) Local Geometry-aware Hand-object Interaction Synthesis. Congratulations students and collaborators!

4 papers accepted at 3DV'24.

October 2023

Topics are: 1) Generating Continual Human Motion in Diverse 3D Scenes, 2) Tracking human–object interaction and scene changes from human motion, 3) Controllable Personalized GAN-based Human Head Avatar and 4) A 3D Clothing Segmentation Dataset and Model. Congratulations students and collaborators!

Neural Surface Fields for Human Modeling from Monocular Depth at ICCV2023.

Aug 2023

Yuxuan Xue will present Neural Surface Fields for Human Modeling from Monocular Depth at ICCV, Paris, 2023.

Best Paper Honourable Mention at SCA2023!!

Aug 2023

Congratulations to Marc Habermann, Lingjie Liu, Weipeng Xu, Gerard Pons-Moll, Michael Zollhoefer and Christian Theobalt for receiving the Best Paper Honorable Mention at SCA'23 for the paper HDHumans: A Hybrid Approach for High-fidelity Digital Humans.

István Sárándi joins as Postdoctoral Researcher

March 2023

István Sárándi joins our group from March 2023 as a Postdoctoral researcher.

Best Paper Honourable Mention at ECCV2022!!

Oct 2022

Congratulations to Garvita Tiwari, Dimitrije Antic, Jan Eric Lenssen, Nikolaos Sarafianos, Tony Tung and Gerard Pons-Moll for receiving the Best Paper Honorable Mention at ECCV'22 for the paper Pose-NDF: Modeling Human Pose Manifolds with Neural Distance Fields. 3 paper awards were given out of 6773 submissions.

EG-Italy Best Thesis Award!

Oct 2022

Congratulations to Riccardo Marin on winning the EG-Italy Best Thesis Award! His PhD thesis "Merging, extending and learning representations for 3D shape matching" exploits, extends, and proposes representations to establish correspondences in the non-rigid domain.

ECCV 2022 Outstanding Reviewers

Oct 2022

Bharat Lal Bhatnagar and Keyang Zhou are Outstanding Reviewers at ECCV22.

7 Papers (2 Orals) accepted at ECCV2022!

July 2022

Topics are: 1) Single image reconstruction of humans and objects, 2) Contact-driven human motion synthesis, 3) Learning a pose manifold, 4) Re-targeting human motion to non-human characters, 5) Human-hand interaction, 6) Learning based model fitting and 7) Weakly supervised 3D instance segmentation (Oral). Congratulations students and collaborators!

Code, data and papers will be available here.

Riccardo Marin will join as Postdoctoral Researcher

May 2022

Riccardo Marin will join our group from July 2022 as a Postdoctoral researcher and is supported by a Humboldt Fellowship.

CVPR 2022 Outstanding Reviewers

May 2022

Bharat Lal Bhatnagar, Garvita Tiwari, Julian Chibane, Ilya Petrov and Riccardo Marin are Outstanding Reviewers at CVPR22.

Facebook / Meta Research Fellowship Award

Feb 2022

Congratulations to Julian Chibane on being selected for the prestigious Facebook / Meta research fellowship award for his work on capture and synthesis of 3D scenes and humans! (37 selected out of 2300 applications).

Julian is grateful to his advisor Gerard Pons-Moll and collaborators!

Julian's fellowship profile: here.

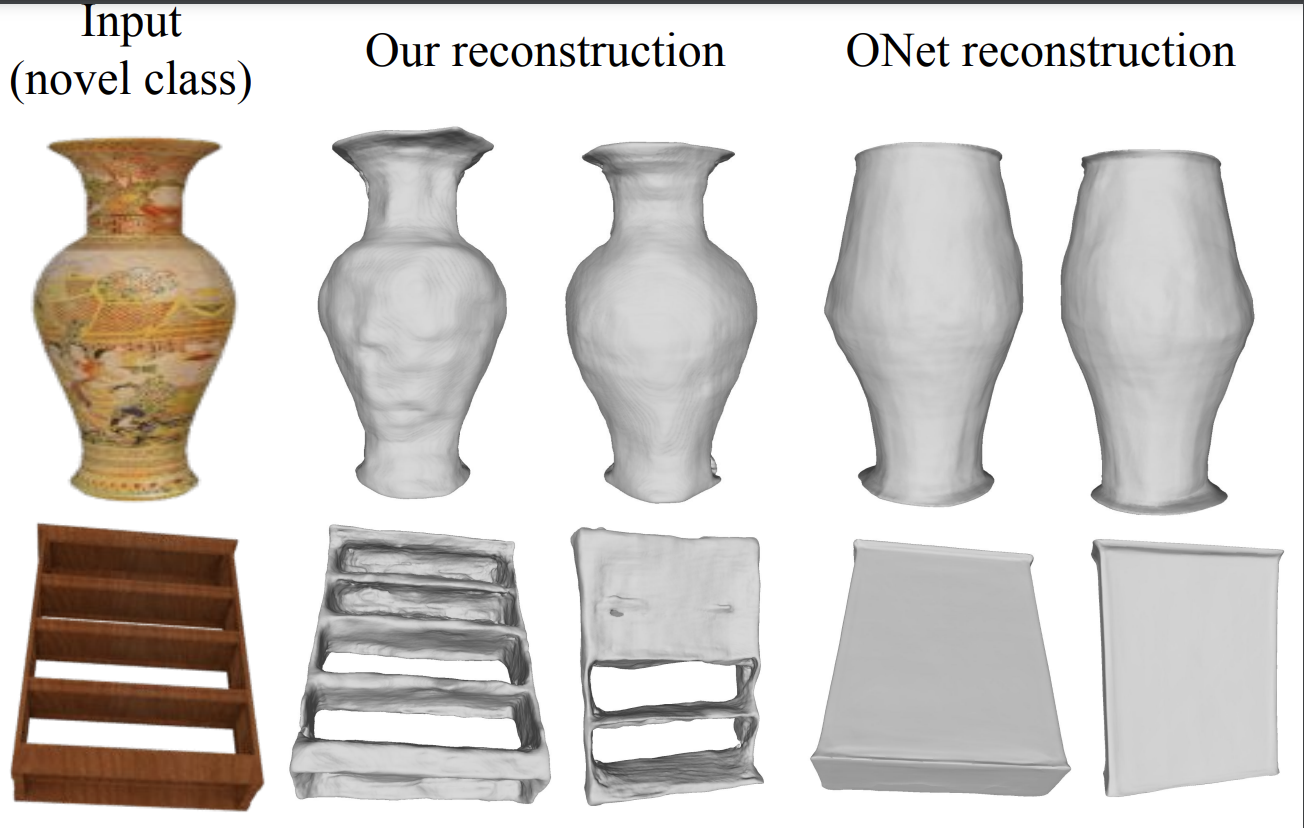

Winners of CVPR SHARP'21 Challenges

June 2021

Congratulations to Julian Chibane and Gerard Pons-Moll for winning both 3D completion tracks of the CVPR'21 SHARP challenge on 3D shape recovery from partial textured 3D scans.

We won with IF-Nets (Chibane et al. CVPR'20) to complete geometry and texture (Chibane et al. ECCV-Workshop'20) plus an effective trick to retain even more detail of the input. In our experience, the model is easy to use, and works really well for a wide variety of 3D completion and reconstruction tasks. Code available here.

HPS has been shortlisted for best paper award at CVPR 2021!

June 2021

Only 32 papers out of 7000+ submissions have been selected as award candidates. Congratulations! Data and code are now available here!

Big News! The group is moving to Tuebingen

March 2021

Gerard Pons-Moll accepted a W3 professorship at the University of Tübingen in the Department of Computer Science. The group will focus on learning, vision and graphics, with an emphasis on capturing and synthesizing human and object shape and appearance, as well as learning digital humans which can move and interact with the 3D world. More info here.

4 Papers (1 oral) accepted at #CVPR2021!

March 2021

PDFs, data and code will be available soon here!

Topics are:

1) HPS: Capturing and self-localizing humans in large 3D scenes with IMUs and a head mounted camera (Oral).

2) Stereo Radiance Fields: generalizing NeRF to multiple scenes using classical stereo principles.

3) SMPLicit: An implicit based representation of people in layered clothing.

4) D-Nerf: generalizing NeRF to dynamic scenes.

Congratulations students and collaborators!

2 Papers accepted at NeurIPS (1 Oral, 1 Poster)

August 2020

Papers are:

-1) LoopReg: Self-supervised Learning of Implicit Surface Correspondences, Pose and Shape for 3D Human Mesh Registration

-2) Neural Unsigned Distance Fields for Implicit Function Learning

Congrats to the team, and thanks to the reviewers for helping us in improving our papers! Code, data and papers will be available here.

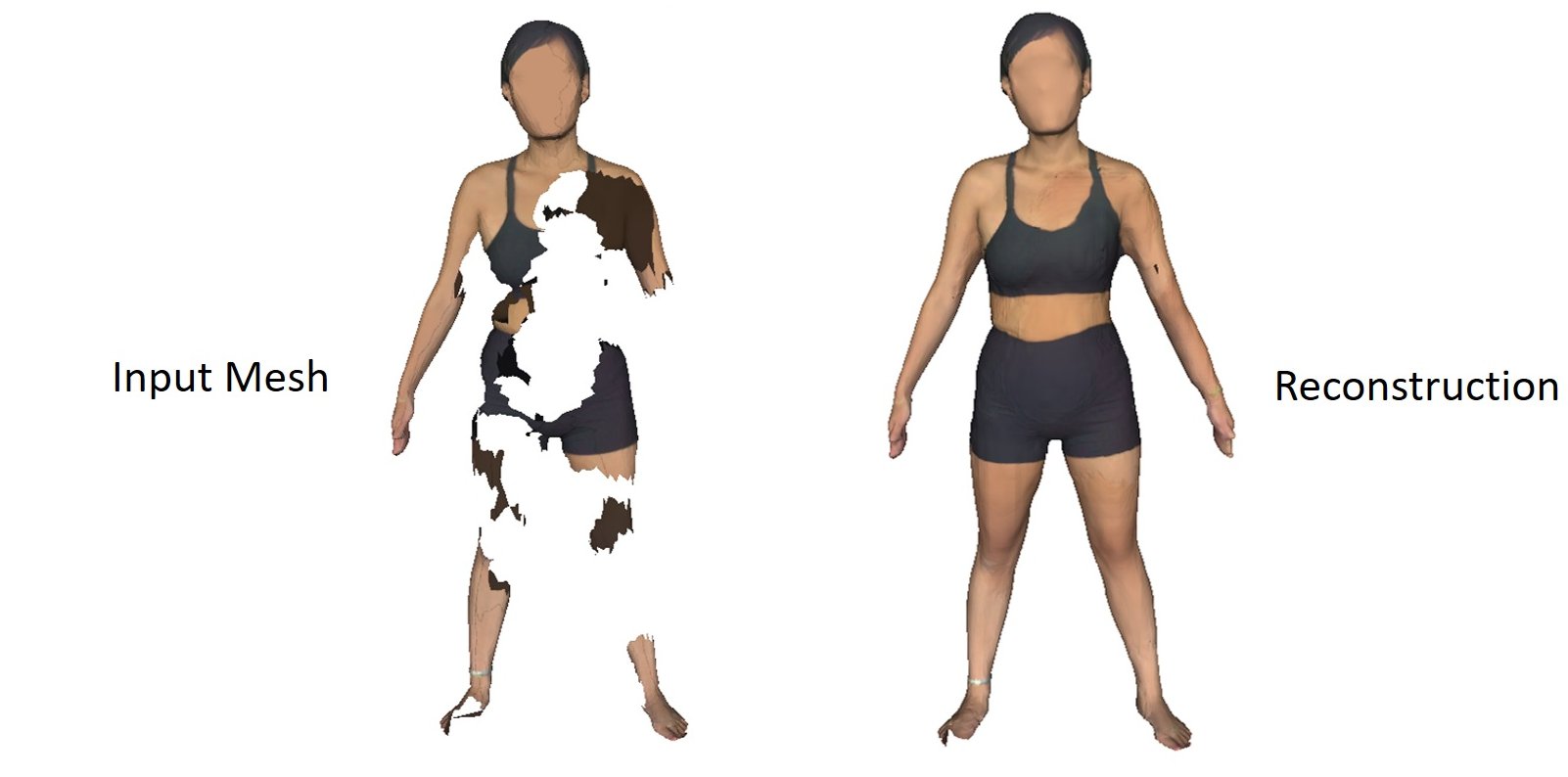

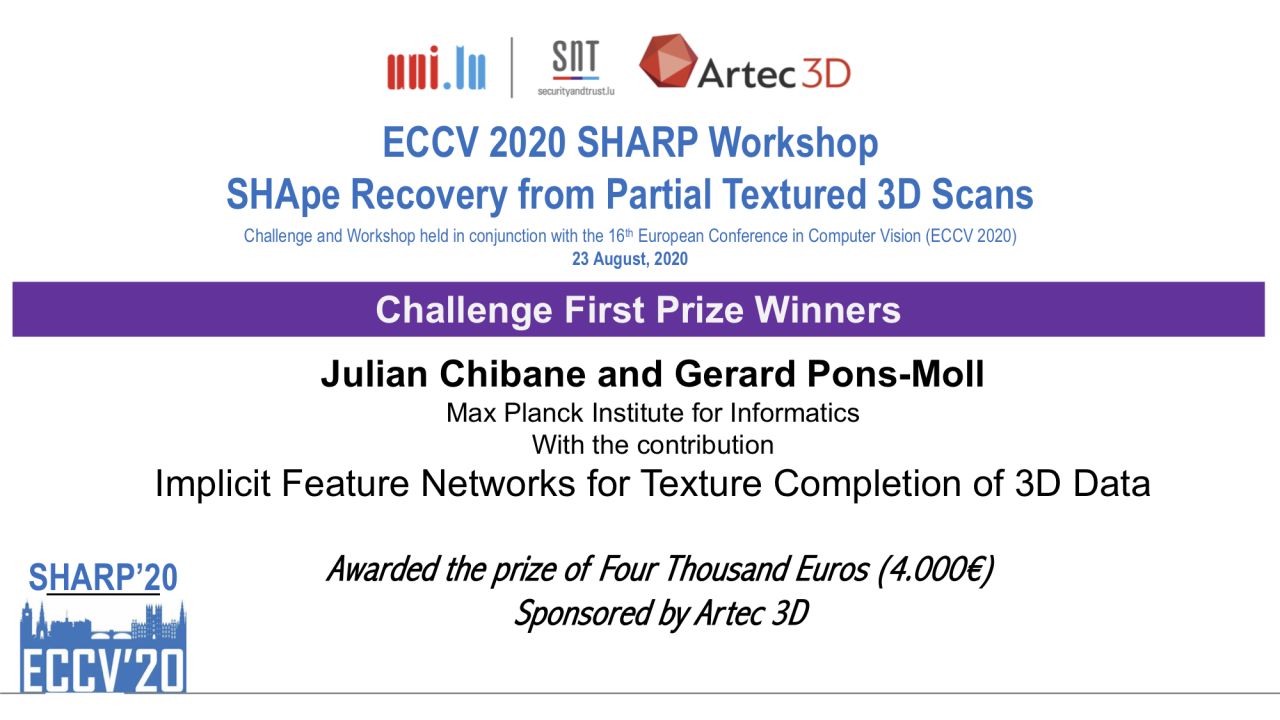

Winners of all ECCV SHARP'20 Challenges

August 2020

Congratulations to Julian Chibane and Gerard Pons-Moll for winning all tracks of the ECCV'20 SHARP challenge on 3D shape recovery from partial textured 3D scans.

We extended IF-Nets (Chibane et al. CVPR'20) to complete geometry and texture (Chibane et al. ECCV-Workshop'20). In our experience, the model is easy to use, and works really well for a wide variety of 3D completion and reconstruction tasks. Code available here.

5 Papers (2 orals, 3 posters) accepted at #ECCV2020!

July 2020

PDFs, data and code will be available soon here!

Topics are: 1) Combining implicit functions and meshes for reconstruction, 2) A model of cloth sizing, 3) Unsupervised disentanglement of shape and pose from meshes, 4) A human implicit function parameterized by pose (NASA) and 5) Monocular 3D object detection in driving scenes. Congratulations students and collaborators!

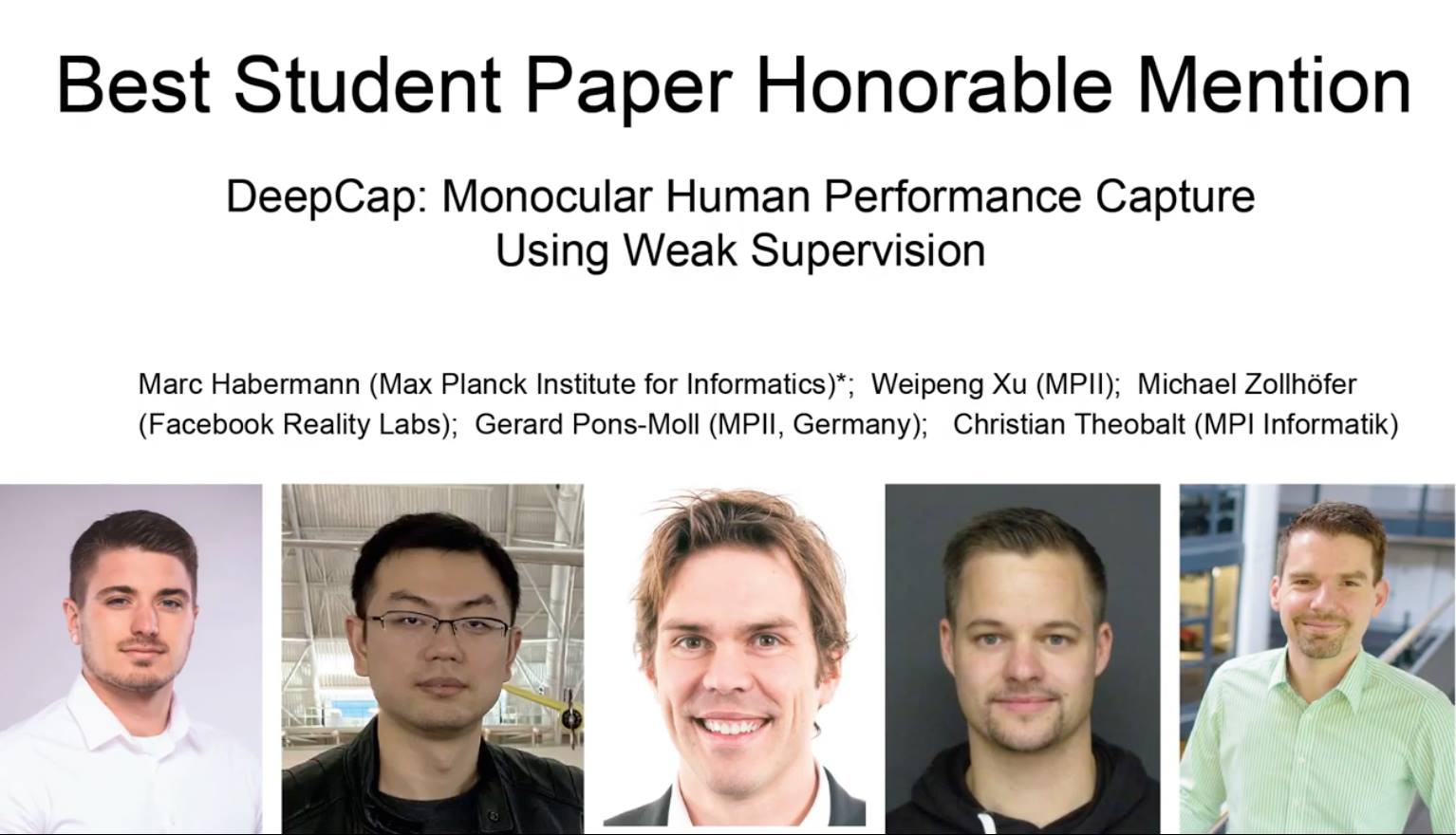

CVPR20 Best Student Paper Honorable Mention!

June 2020

Congrats to collaborators, and thanks to the reviewers for useful feedback, and the awards committee for selecting our paper among many others worthy of the prize--we are honored.

3DPW Challenge and Workshop at ECCV 2020 featured on the front-page of computer vision news

June 2020

Gerard Pons-Moll, Angjoo Kanazawa, Michael Black and Aymen Mir talk about challenges in perceiving people in 3D, see the interview. Thanks Ralph Anzarouth!

The deadline is approaching, participate: 3DPW Challenge.

5 Papers (2 orals, 3 posters) accepted at #CVPR2020!

February 2020

Pdfs, data and code are available here!

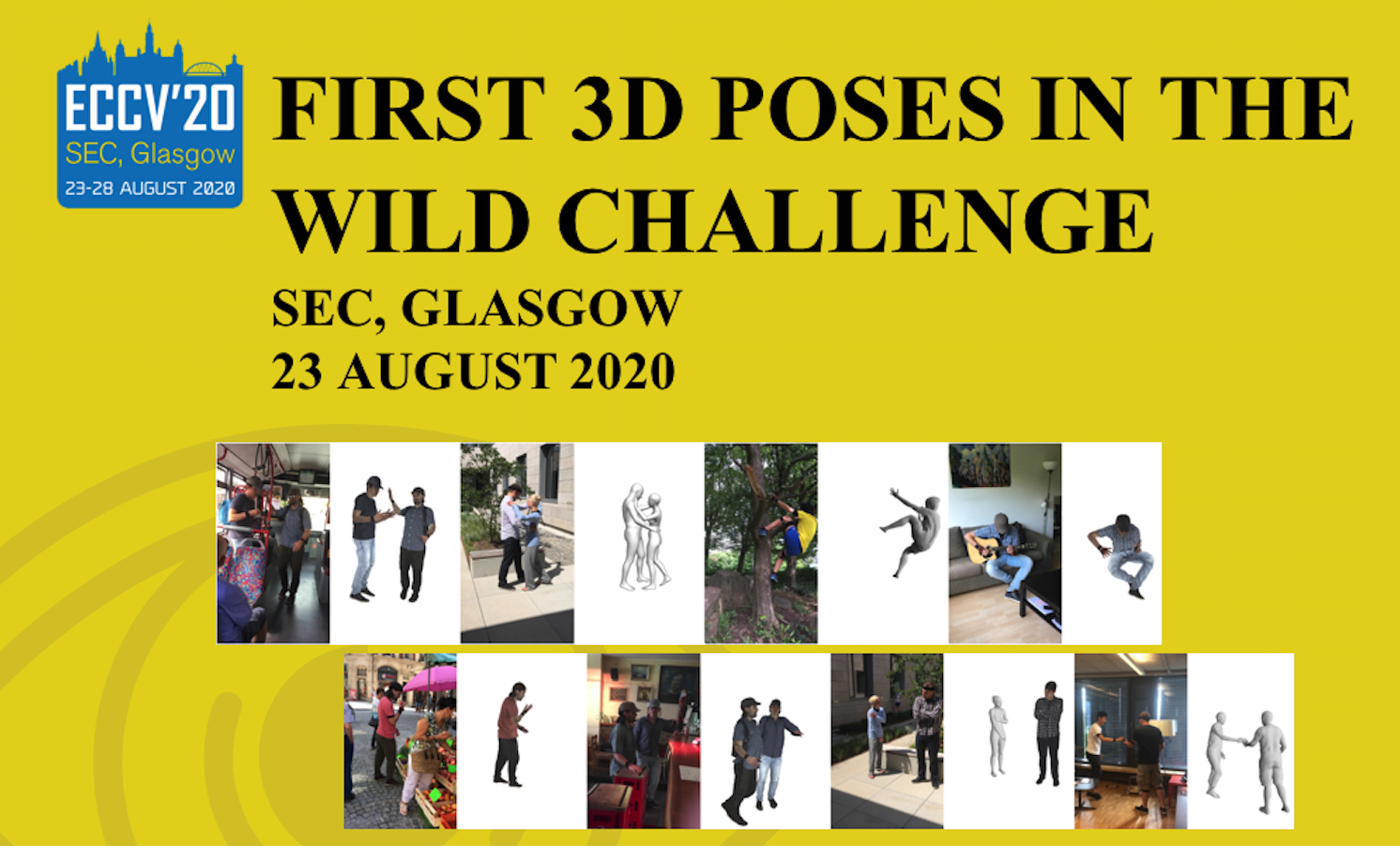

Congratulations to all collaborators!

3DPW Challenge and Workshop at ECCV 2020.

February 2020

Gerard Pons-Moll will organize the first 3DPW Challenge and Workshop at ECCV 2020 together with Angjoo Kanazawa, Michael Black and Aymen Mir.

The aim of the workshop and challenge is to establish a benchmark to quantitatively evaluate 3D human pose and shape reconstruction methods in the wild using the 3DPW dataset.

Area Chair CVPR 2021 and 3DV 2020.

February 2020

Gerard Pons-Moll will serve as Area Chair for CVPR 2021

He will also serve as Area Chair for 3DV 2020

Area Chair ECCV 2020 and service.

2020

Gerard Pons-Moll will serve as Area Chair for ECCV 2020

He will also serve as Area Chair for Face and Gesture FG 2020

Selected as an Outstanding Reviewer of CVPR'19

German Pattern Recognition Award

September 2019

Gerard Pons-Moll has been awarded the 2019 German Pattern Recognition Award, the highest prize awarded annually to one researcher by the German Society of Computer Vision and Machine Learning.

Congrats to the group and collaborators!

3 papers accepted at ICCV 2019! 1 paper at 3DV'19

July 2019

Pdfs, data and code here!

-1) Multi-Garment Net: Learning to Dress 3D People from Images

-2) Tex2Shape: Detailed Full Human Body Geometry from a Single Image

-3) AMASS: Archive of Motion Capture as Surface Shapes

-4) 360-Degree Textures of People in Clothing from a Single Image

Congratulations to all co-authors!

Google Faculty Research Award

February 2019

Gerard Pons-Moll received a Google Faculty Research Award.

We have open positions in our group: Job Offers

3 papers accepted to CVPR 2019!

February 2019

Paper pdfs, videos and code coming soon!

1) Learning to Reconstruct People in Clothing from a Single RGB Camera

2) SimulCap: Single-View Human Performance Capture with Cloth Simulation

3) In the Wild Human Pose Estimation using Explicit 2D Features and Intermediate 3D Representations

Congratulations to all co-authors!

Emmy Noether starting grant!

November 2018

Gerard Pons-Moll has been awarded an Emmy Noether grant. The grant, named after the group "Real Virtual Humans" (RVHu), consists of 1.6 million euros to conduct research at the intersection of vision, graphics and learning with special focus on analyzing and digitizing humans.

3 papers accepted at 3DV 2018!

1 Paper won the best student paper award!

September 2018

Pdfs and videos available!

-Neural Body Fitting: Unifying Deep Learning and Model Based Human Pose and Shape Estimation

3DV Best Student Paper Award

-Detailed Human Avatars from Monocular Video

-Single-Shot Multi-Person 3D Pose Estimation From Monocular RGB

1 paper at ECCV'18

September 2018

Recovering Accurate 3D Human Pose in The Wild Using IMUs and a Moving Camera

New dataset with ground truth 3D poses in the wild! Download

We have released the first (and most challenging) dataset of natural scenes with multiple people with accurate 3D pose and shape! The RGB video includes scenes like taking the bus, walking in the city, shopping, sports, etc.

PeopleCap'18 workshop at ECCV'18

September 14th 2018

Gerard Pons-Moll and Jonathan Taylor will organize the second edition of PeopleCap.

The workshop will bring together researchers in the fields of 3D human modelling, reconstruction and tracking. The submission deadline is July 20th.

Science article about our CVPR'18 paper

April 13th 2018

One of our CVPR papers has been covered in Science magazine.

We developed a method to create a 3D avatar from a few seconds of video footage. See the Paper.

Latest Publications

ActionPlan: Future-Aware Streaming Motion Synthesis via Frame-Level Action Planning

in , 2026.

MoLingo: Motion-Language Alignment for Text-to-Motion Generation

in Conference on Computer Vision and Pattern Recognition (CVPR), 2026.

CARI4D: Category Agnostic 4D Reconstruction of Human-Object Interaction

in Conference on Computer Vision and Pattern Recognition (CVPR), 2026.