Abstract

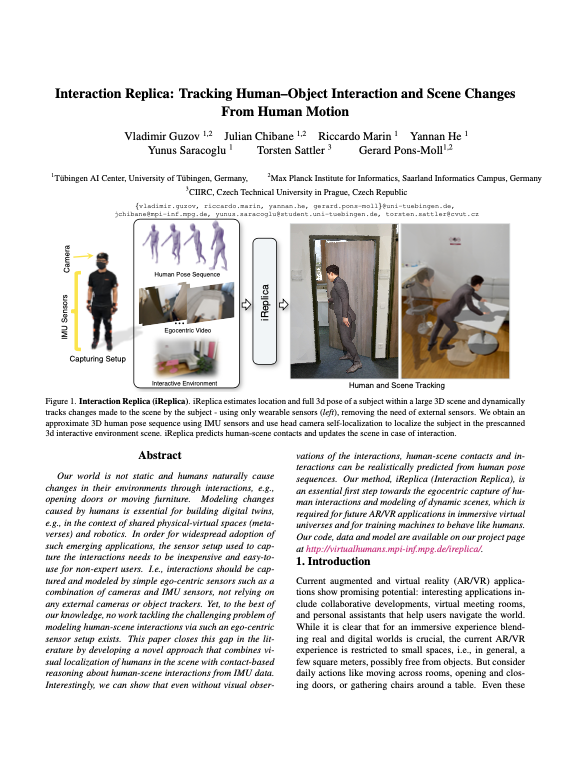

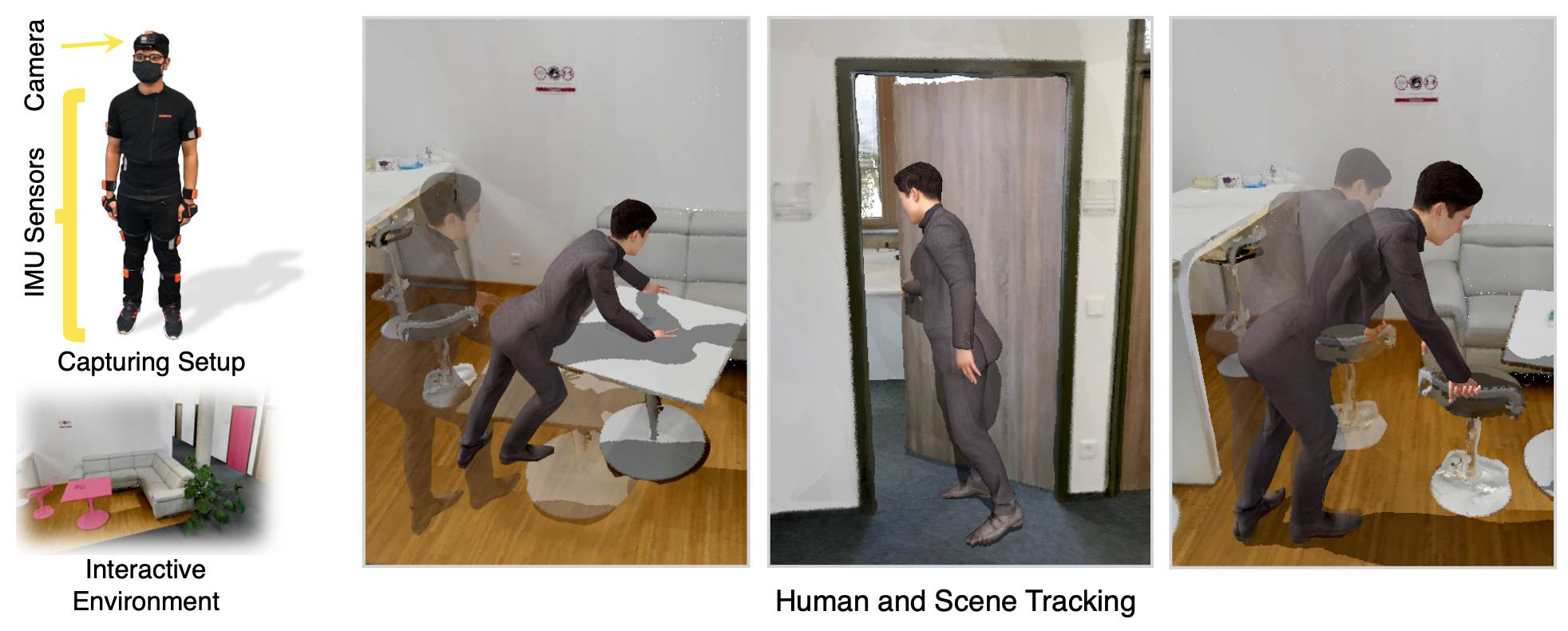

Our world is not static and humans naturally cause changes in their environments through interactions, e.g., opening doors or moving furniture. Modeling changes caused by humans is essential for building digital twins, e.g., in the context of shared physical-virtual spaces (metaverses) and robotics. In order for widespread adoption of such emerging applications, the sensor setup used to capture the interactions needs to be inexpensive and easy-to-use for non-expert users. I.e., interactions should be captured and modeled by simple ego-centric sensors such as a combination of cameras and IMU sensors, not relying on any external cameras or object trackers. Yet, to the best of our knowledge, no work tackling the challenging problem of modeling human-scene interactions via such an ego-centric sensor setup exists. This paper closes this gap in the literature by developing a novel approach that combines visual localization of humans in the scene with contact-based reasoning about human-scene interactions from IMU data. Interestingly, we can show that even without visual observations of the interactions, human-scene contacts and interactions can be realistically predicted from human pose sequences. Our method, iReplica (Interaction Replica), is an essential first step towards the egocentric capture of human interactions and modeling of dynamic scenes, which is required for future AR/VR applications in immersive virtual universes and for training machines to behave like humans.

Our goal

Given the wearable sensors and the interactive environment, we want to estimate human and object positions and achieve visually plausible results.

We propose iReplica – a method for capturing human-object interactions using only wearable sensors. iReplica can track both the human and the object pose from the user's motion alone.

Paper, code and data

The problem

The main limitation of previous body-mounted capture systems is their inability to register dynamic scene changes such as object movement or door opening. Our goal is to fix that by modeling human-object interaction.

Interaction challenges

This task poses several challenges, and interaction modeling is the toughest one. During the interaction, the object can be barely visible or, conversely, it can obstruct the whole view, making it difficult to even tell whether the object is moving. This makes it hard to localize the object and model the interaction.

Our solution – iReplica

Our key idea: human motion can already tell us a lot about the interaction. By predicting the interaction from the human motion alone, we can track the object even when it is not visible in the head camera.
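To make the idea concrete, here is a minimal toy sketch of contact-driven object tracking. It is not the iReplica implementation: the function name `track_object_from_hand`, the per-frame contact flags taken as given input, and the rule that the object simply translates with the hand while in contact are all assumptions made for illustration.

```python
from typing import List, Sequence, Tuple

Vec3 = Tuple[float, float, float]


def track_object_from_hand(
    hand_positions: Sequence[Vec3],
    in_contact: Sequence[bool],
    object_start: Vec3,
) -> List[Vec3]:
    """Propagate an object position from hand motion and contact flags.

    Hypothetical simplification: if the hand is in contact in two
    consecutive frames, the object moves by the same displacement as
    the hand; otherwise the object stays where it was.
    """
    if len(hand_positions) != len(in_contact):
        raise ValueError("hand_positions and in_contact must have equal length")
    if not hand_positions:
        return []

    trajectory: List[Vec3] = [object_start]
    for t in range(1, len(hand_positions)):
        prev_obj = trajectory[-1]
        if in_contact[t - 1] and in_contact[t]:
            # Hand displacement between the two frames, applied to the object.
            delta = tuple(c - p for c, p in zip(hand_positions[t], hand_positions[t - 1]))
            new_obj = tuple(o + d for o, d in zip(prev_obj, delta))
        else:
            # No sustained contact: the object is assumed to be static.
            new_obj = prev_obj
        trajectory.append(new_obj)
    return trajectory


# Toy input: the hand moves along x and touches the object in frames 1 and 2.
hands = [(0.0, 0.0, 1.0), (0.5, 0.0, 1.0), (1.0, 0.0, 1.0), (1.5, 0.0, 1.0)]
contacts = [False, True, True, False]
print(track_object_from_hand(hands, contacts, object_start=(2.0, 0.0, 0.5)))
```

In this toy example the object never needs to be seen by a camera: its position is updated only from the hand trajectory and the contact flags, and it moves only between frames 1 and 2, where contact is sustained.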

Results and comparisons

Citation

@inproceedings{guzov24ireplica,
  title = {Interaction Replica: Tracking human–object interaction and scene changes from human motion},
  author = {Guzov, Vladimir and Chibane, Julian and Marin, Riccardo and He, Yannan and Saracoglu, Yunus and Sattler, Torsten and Pons-Moll, Gerard},
  booktitle = {International Conference on 3D Vision (3DV)},
  month = {March},
  year = {2024},
}

Acknowledgments

Special thanks to the RVH team members and the reviewers, whose feedback helped improve the manuscript. The project was made possible by funding from the Carl Zeiss Foundation. This work is supported by the Deutsche Forschungsgemeinschaft (DFG, German Research Foundation) - 409792180 (Emmy Noether Programme, project: Real Virtual Humans), the German Federal Ministry of Education and Research (BMBF): Tübingen AI Center, FKZ: 01IS18039A, and the Czech Science Foundation (GAČR) EXPRO (grant no. 23-07973X). Gerard Pons-Moll is a member of the Machine Learning Cluster of Excellence, EXC number 2064/1 - Project number 390727645. Julian Chibane is a fellow of the Meta Research PhD Fellowship Program - area: AR/VR Human Understanding. Riccardo Marin has been supported by the European Union’s Horizon 2020 research and innovation program under the Marie Skłodowska-Curie grant agreement No 101109330. The website is based on the StyleGAN3 website template.